If you set your output to an image type, it uses the tonal areas detected in earlier frames when applying the adjustment, to keep it consistent. It also applies an adjustment only to the areas of data that exist in the frame, since most frames have a little data from earlier frames and a little that matches the next few frames. Video Enhancer uses similar starting points but makes only one or two comparisons before applying the adjustment. The two sliders adjust how the final output is handled, not the algorithm itself, which is handled only by the machine-learning-based process. You can still adjust the blur and the noise level generated in Gigapixel, which really just determines how it weights motion edges and the depth of contrast noise removal, not color noise. It also used a DAT file, or basic data file, which I can only assume was marking the different identified areas of the image before applying the algorithm to each detected area. I know because I ran tests and monitored my system.

Gigapixel works with single images by creating multiple tests and comparing them, which is very similar to the original machine learning process used to generate the initial settings (statistical mappings for the tonal and shape areas that are detected). The difference is in how they re-implement the same process afterward. Gigapixel and Video Enhancer use settings derived from machine learning to perform the scaling of images.

I didn't check whether the interlaced chroma upscaling bug was fixed. Topaz fixed a bunch of bugs with the RGB conversion, so it should be OK now for 601/709.

Flags today are still much less important than the "709 for HD" assumption. You should cover your bases, because SD color and flags at HD resolution will cause trouble in many scenarios: web, YouTube, many portable devices, NLEs, some players. It might be OK in a few specific software players.

But the reverse is not true: SD flagging (601/170m/470bg, etc.). If you had to choose one, the actual color change with colormatrix (or similar) is drastically more important than flags. You can get away with applying the colormatrix transform without flagging (undef) and it will look OK in 99% of scenarios. (An early version I tested with did not.)

Not what I said. Flagging is best practice: it's not necessary, but ideal. Applying the colormatrix transform is far more important, today and yesterday.
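To illustrate why the "709 for HD" assumption matters more than flags, here is a minimal sketch of how a player might pick a matrix. The function name, the `honors_flags` switch, and the resolution threshold are all assumptions made for illustration; real players differ in the exact rule they use.

```python
from typing import Optional

def guess_matrix(width: int, height: int,
                 flagged: Optional[str] = None,
                 honors_flags: bool = True) -> str:
    """Hypothetical player logic: which YCbCr matrix gets used for decoding."""
    # A player that honors flags uses them when they are present and known.
    if honors_flags and flagged in ("bt601", "bt709"):
        return flagged
    # Otherwise fall back to a resolution guess (threshold is an assumption).
    return "bt709" if (width >= 1280 or height > 576) else "bt601"

# SD colors left in an HD frame, flagged correctly as 601:
print(guess_matrix(1920, 1080, flagged="bt601"))                      # flag honored
print(guess_matrix(1920, 1080, flagged="bt601", honors_flags=False))  # flag ignored
```

With the flag ignored, the HD frame is decoded as 709 even though the pixels are 601, which is the color shift described above. Converting the pixels to 709 makes both cases come out right, flagged or not.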

P.S.: No clue whether Topaz now properly adjusts the color matrix and flagging.

It is 'best practice' to convert the color characteristics (and adjust the flagging accordingly) when converting between HD and SD. But this conversion is not needed if your playback devices/software properly honor the color flags. Flagging according to the color characteristics is necessary.

B. (Some players also did not always honor TV/PC scale flagging, which is why it was 'best practice' to stay in TV scale, or to convert to TV scale at an early stage in the processing of a source in case it wasn't TV scale.)

A. Yes, it's best practice, which was introduced because a lot of players did not honor the flagging of a source but either always used 601 or 709 or, if you were lucky, at least used 601 for SD and 709 for HD.

When you perform an HD => SD conversion, you typically perform a 709 => 601 colormatrix (or equivalent) conversion. When you upscale SD => HD, you typically perform a 601 => 709 colormatrix or similar conversion.

I would post in another thread or on selur's forum, because this is the wrong thread to deal with those topics.

Usually HD is "709" by convention; otherwise the colors will get shifted when playing back the HD version. Usually the filter argument overrides the frame props.

Also, there is no 601 => 709 matrix conversion in the script. I don't use Hybrid, but the ffmpeg demuxer also makes a difference: -f vapoursynth_alt is generally faster than -f vapoursynth when more than one filter is used.

Also, "DV" is usually BFF, and you set FieldBased=1 (BFF), but you have TFF=True in the QTGMC call.

Ideal settings are going to be different for different hardware setups. You have to switch out filters, play with settings, and remeasure. You can use vsedit's benchmark function to optimize the script. When I check on a Windows laptop, it's a few times slower using the iGPU than the discrete GPU or CPU (in short, iGPU OpenCL performance is poor).

Is it a discrete GPU or an Intel GPU on the MacBook? If it's an Intel GPU, I suspect znedi3_rpow2 might be faster in your case than OpenCL using nnedi3cl_rpow2.
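For readers wondering what the 601 => 709 colormatrix step mentioned above actually does to pixel values, here is a minimal pure-Python sketch. It is not the code of any particular filter: the function names are made up for illustration, it assumes 8-bit limited (TV) range, and it only swaps the luma coefficients via an intermediate R'G'B' value, ignoring any difference in primaries or transfer function.

```python
# Luma coefficients (Kr, Kb) for each matrix; Kg = 1 - Kr - Kb.
COEFFS = {"bt601": (0.299, 0.114), "bt709": (0.2126, 0.0722)}

def ycbcr_to_rgb(y, cb, cr, matrix):
    """Normalized Y in [0, 1], Cb/Cr in [-0.5, 0.5] -> gamma-encoded R'G'B'."""
    kr, kb = COEFFS[matrix]
    kg = 1.0 - kr - kb
    r = y + 2.0 * (1.0 - kr) * cr
    b = y + 2.0 * (1.0 - kb) * cb
    g = (y - kr * r - kb * b) / kg
    return r, g, b

def rgb_to_ycbcr(r, g, b, matrix):
    """Gamma-encoded R'G'B' -> normalized Y, Cb, Cr for the given matrix."""
    kr, kb = COEFFS[matrix]
    kg = 1.0 - kr - kb
    y = kr * r + kg * g + kb * b
    return y, (b - y) / (2.0 * (1.0 - kb)), (r - y) / (2.0 * (1.0 - kr))

def convert_matrix(y, cb, cr, src="bt601", dst="bt709"):
    """Re-express the same color using a different YCbCr matrix."""
    return rgb_to_ycbcr(*ycbcr_to_rgb(y, cb, cr, src), dst)

def convert_pixel_8bit(y8, cb8, cr8, src="bt601", dst="bt709"):
    """Same conversion for one 8-bit limited-range pixel (Y 16-235, C 16-240)."""
    y, cb, cr = convert_matrix((y8 - 16) / 219, (cb8 - 128) / 224,
                               (cr8 - 128) / 224, src, dst)
    clamp = lambda v: max(0, min(255, round(v)))
    return clamp(16 + 219 * y), clamp(128 + 224 * cb), clamp(128 + 224 * cr)

# Neutral gray has no chroma, so it is unchanged; saturated colors shift.
print(convert_pixel_8bit(128, 128, 128))
print(convert_pixel_8bit(81, 90, 240))
```

Grays pass through untouched, which is why a missing conversion is easy to overlook on low-saturation footage and obvious on reds and greens.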

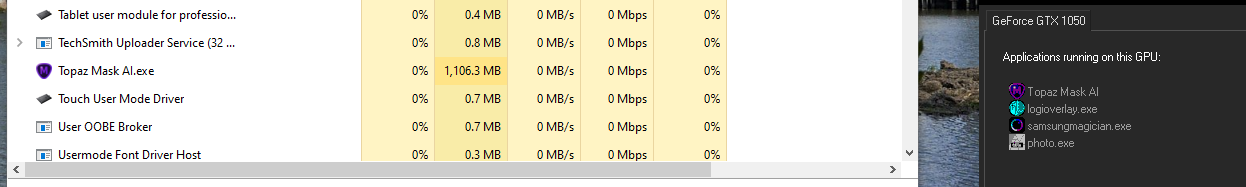

It might be your GPU's OpenCL performance causing a bottleneck. What are your CPU and GPU % usage during an encode?

Hybrid took 30 minutes to output the file. Just tried a 40-second DV clip to HD with modified settings from above:
